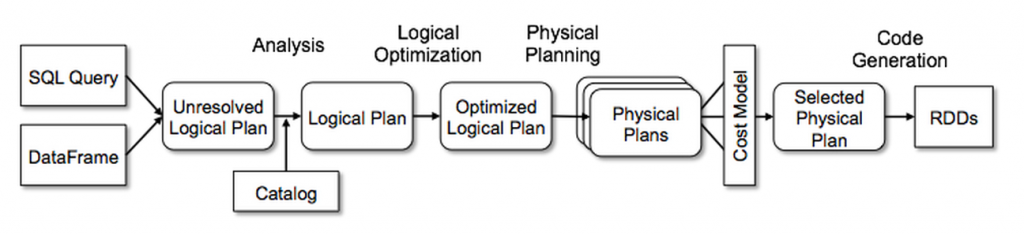

"Catalyst handles the 'What' - it parses your messy SQL or DataFrame code, applies logical rules, and figures out the absolute best physical plan to get your data."

"Encoders are the translators. They bridge the gap between your standard Scala/Java objects and Tungsten's raw binary world. They instantly pack your objects into UnsafeRows."

"When using standard Scala functions like .map(), Spark is forced to stop the engine, use the Encoder to unpack the binary data into a heavy JVM object, run your math, and then re-pack it back into raw bytes."

The Catalyst optimizer parses SQL or DataFrame code, applies logical optimization rules, and selects a physical plan for execution. Tungsten executes that plan by generating JVM bytecode and managing memory in a compact binary format. Encoders translate between standard Scala/Java objects and that binary format (UnsafeRow). A standard Scala function passed to .map() is opaque to Catalyst, so Spark must unpack each row into a JVM object, run the function, and repack the result. This serialization tax can significantly slow processing, especially on large datasets.
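One way to see the difference is to compare the plans Spark prints for a typed .map() and for an equivalent column expression. The following is a minimal sketch, not taken from the article: the `Reading` case class, the object name, and the sample values are hypothetical, and it assumes a local Spark session with the Spark SQL dependency on the classpath.

```scala
import org.apache.spark.sql.{Dataset, SparkSession}
import org.apache.spark.sql.functions.col

// Hypothetical record type, used only for illustration.
case class Reading(id: Long, value: Double)

object SerializationTaxSketch {
  def main(args: Array[String]): Unit = {
    val spark = SparkSession.builder()
      .appName("serialization-tax-sketch")
      .master("local[*]")
      .getOrCreate()
    import spark.implicits._ // brings the Encoder for Reading into scope

    val readings: Dataset[Reading] =
      Seq(Reading(1L, 1.5), Reading(2L, 2.5)).toDS()

    // Typed lambda: Catalyst cannot look inside it, so each row is
    // decoded into a Reading object and the result is encoded again.
    val viaMap = readings.map(r => r.copy(value = r.value * 2))

    // Column expression: Catalyst sees the multiplication directly and
    // can evaluate it on the binary rows without building Reading objects.
    val viaColumns = readings.withColumn("value", col("value") * 2)

    // Print both physical plans for comparison.
    viaMap.explain()
    viaColumns.explain()

    spark.stop()
  }
}
```

In the plan for `viaMap` you would typically expect to find object deserialization and serialization steps wrapped around the map, while the plan for `viaColumns` should contain none. The exact plan text varies by Spark version, so treat it as a diagnostic rather than a fixed output.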

Read at Medium
