"The landscape of large language models (LLMs) is undergoing a fundamental transformation toward agentic intelligence, where models can autonomously perceive, plan, reason, and act within complex and dynamic environments. This paradigm shift moves beyond traditional static imitation learning toward models that actively learn through interaction, acquire skills beyond their training distribution, and adapt their behavior based on experience. Agentic intelligence represents a critical capability for the next generation of foundation models, with transformative implications for tool use, software development, and real-world autonomy."

"Kimi-K2 stands at the forefront of this revolution. As a 1.04 trillion-parameter Mixture-of-Experts (MoE) language model with 32 billion activated parameters, Kimi-K2 was purposefully designed to address the core challenges of agentic capability development. The model achieves remarkable performance across diverse benchmarks: 66.1 on Tau2-bench, 76.5 on ACEBench (en), 65.8 on SWE-bench Verified, 53.7 on LiveCodeBench v6, and 75.1 on GPQA-Diamond."
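To make the Mixture-of-Experts figures concrete, the short sketch below works out what share of the model is used per token. It relies only on the two numbers quoted above (1.04 trillion total parameters, 32 billion activated); the function and variable names are illustrative and do not come from the article or from any Kimi-K2 code.

```python
# Figures quoted in the excerpt above; everything else here is illustrative.
TOTAL_PARAMS = 1.04e12   # total parameters in the MoE model
ACTIVE_PARAMS = 32e9     # parameters activated for each token


def active_fraction(total: float, active: float) -> float:
    """Return the share of parameters used to process a single token."""
    if total <= 0 or not 0 <= active <= total:
        raise ValueError("expected 0 <= active <= total and total > 0")
    return active / total


fraction = active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS)
ratio = TOTAL_PARAMS / ACTIVE_PARAMS

print(f"active share per token: {fraction:.2%}")    # 3.08%
print(f"total-to-active ratio:  {ratio:.1f}x")      # 32.5x
```

Roughly 3% of the parameters take part in any single forward pass, which is why a model of this size can have per-token compute closer to that of a 32-billion-parameter dense model.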

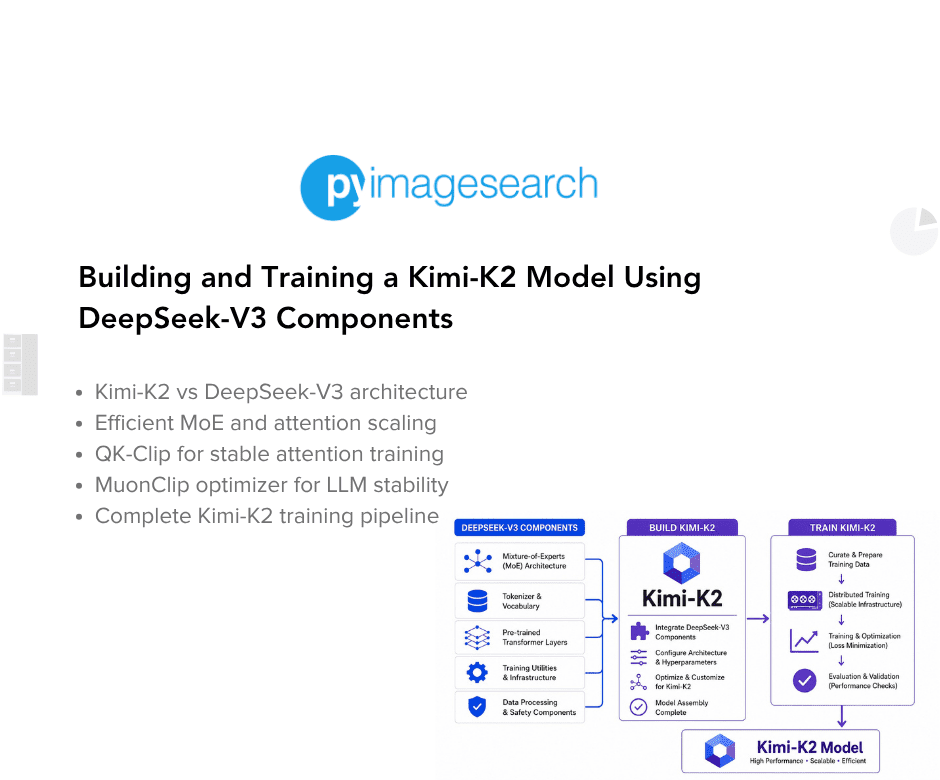

"On the LMSYS (Large Model Systems Organization) Arena leaderboard, Kimi-K2 ranks as the top open-source model and 5th overall, competing closely with Claude 4 Opus and Claude 4 Sonnet. In this lesson, we dive deep into the technical innovations behind Kimi-K2, focusing on its architectural differences from DeepSeek-V3, the revolutionary MuonClip optimizer, and training data improvements. We also provide a complete implementation guide using DeepSeek-V3 components as building blocks."

"While Kimi-K2 builds on DeepSeek-V3's architecture, several strategic modifications were made to optimize agentic capabilities and inference efficiency. Understanding these architectural differences is crucial for implementing the model effectively (Table 1). The most significant architectural departure lies in Kimi-K2's aggressive sparsity scaling. Through carefully controlled small-scale experiments, the Kimi team de"

Agentic intelligence shifts large language models from static imitation toward systems that learn through interaction, acquire skills beyond their training distribution, and adapt their behavior from experience. The article positions this capability as essential for next-generation foundation models, with implications for tool use, software development, and real-world autonomy. Kimi-K2 is presented as a leading agentic model: a 1.04 trillion-parameter Mixture-of-Experts system with 32 billion activated parameters. It reports 66.1 on Tau2-bench, 76.5 on ACEBench (en), 65.8 on SWE-bench Verified, 53.7 on LiveCodeBench v6, and 75.1 on GPQA-Diamond. On the LMSYS Arena leaderboard, it is described as the top open-source model and 5th overall. The article covers the model's architectural differences from DeepSeek-V3, most notably aggressive sparsity scaling, as well as the MuonClip optimizer and improved training data, and it includes an implementation guide built from DeepSeek-V3 components.

#agentic-intelligence #mixture-of-experts-moe #llm-optimization #model-architecture #benchmark-evaluation

Read at PyImageSearch
