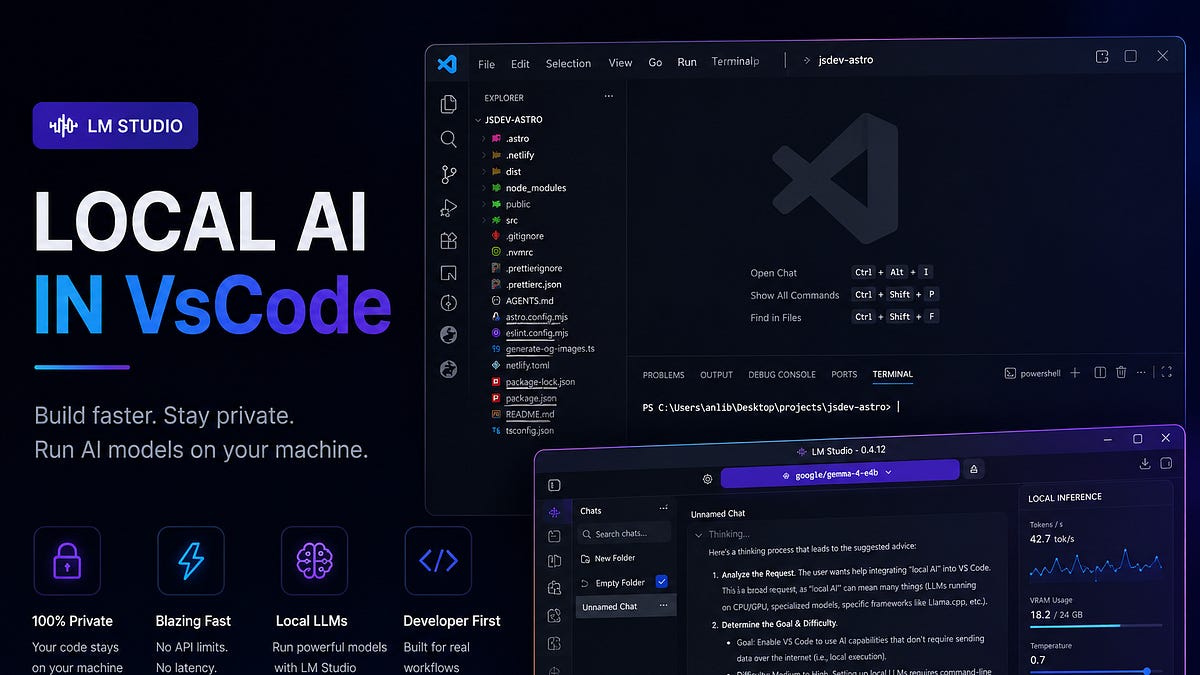

"Instead of relying entirely on external services, developers can now run powerful language models directly on their own machines and integrate them into their editor, terminal, and everyday workflow. This approach offers several advantages: […] Local AI environments solve many of these issues. Instead of sending requests to external servers, everything runs directly on the developer's machine. That means projects remain private, inference works offline, and the environment becomes fully customizable."

"Of course, there is a tradeoff. Local language models require significantly more hardware resources than traditional developer tools. Running local models is heavily dependent on system performance. A weak machine may technically load a model, but the actual experience can become frustrating very quickly. Response generation slows down, inference becomes inconsistent, and larger projects become difficult to work with."

"Mid-range systems usually provide a much more comfortable experience, especially for small and medium-sized projects. The most important hardware components are: […] Operating system optimization also plays a surprisingly large role. Different Windows builds can noticeably affect model performance and inference speed."

"Most JavaScript developers already have […] installed and configured. Because of that, there is no reason to spend half the article installing VS Code and Node.js from scratch. Instead, it makes more sense to jump directly into the interesting part: building a fully local AI workflow with LM Studio. The next step is installing LM Studio. Download: […] LM Studio acts as the foundation of the entire local AI workflow."
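For JavaScript developers, one common way to connect LM Studio to Node.js scripts or editor tooling is through its local server. The TypeScript sketch below is a minimal illustration under stated assumptions, not the article's method: it assumes the local server has been enabled in LM Studio, listens on the default `http://localhost:1234`, and exposes the OpenAI-compatible `/v1/chat/completions` endpoint. The helper names `buildChatRequest` and `askLocalModel`, the system prompt, and the temperature value are hypothetical, and the model identifier should be whichever model you have loaded. It relies on the built-in `fetch` available in Node.js 18 and later.

```typescript
// Minimal sketch: query a model served by LM Studio's local server from Node.js 18+.
// Assumptions (not from the article): the server is running on the default port 1234
// and accepts OpenAI-compatible chat completion requests.

const LMSTUDIO_BASE_URL = "http://localhost:1234/v1"; // assumed default; adjust to your setup

interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface ChatRequest {
  model: string;
  messages: ChatMessage[];
  temperature: number;
}

// Hypothetical helper: builds an OpenAI-style chat payload. Pure, so it is testable offline.
function buildChatRequest(model: string, prompt: string, temperature = 0.2): ChatRequest {
  return {
    model,
    messages: [
      { role: "system", content: "You are a concise coding assistant." },
      { role: "user", content: prompt },
    ],
    temperature,
  };
}

// Hypothetical helper: sends the prompt to the local server and returns the first reply.
async function askLocalModel(model: string, prompt: string): Promise<string> {
  const res = await fetch(`${LMSTUDIO_BASE_URL}/chat/completions`, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(buildChatRequest(model, prompt)),
  });
  if (!res.ok) {
    throw new Error(`LM Studio server returned HTTP ${res.status}`);
  }
  const data = (await res.json()) as {
    choices: { message: { content: string } }[];
  };
  // Fall back to an empty string if the server returned no choices.
  return data.choices[0]?.message.content ?? "";
}
```

For example, `askLocalModel("<loaded-model-id>", "Explain this regular expression")` resolves to the model's reply as a string, and the request never leaves `localhost`. Keeping the payload builder separate from the network call makes it easy to unit-test without a running server.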

Local-first AI development shifts from cloud-only workflows to running language models directly on a developer's own machine. This avoids sending requests to external servers, keeping projects private and enabling offline inference. The local environment can be fully customized and integrated into everyday tools like editors and terminals. The approach has a tradeoff: local models need substantially more hardware resources than traditional developer tools. Performance depends heavily on system capability, and weak machines can lead to slow response generation, inconsistent inference, and difficulty handling larger projects. Mid-range systems tend to provide a more comfortable experience for small and medium-sized projects. Both hardware and operating system optimization affect model performance; even different Windows builds can noticeably change inference speed. Because most JavaScript developers already have VS Code and Node.js installed, the setup skips those steps and focuses on installing LM Studio as the foundation of the local AI workflow.

Read at Substack
