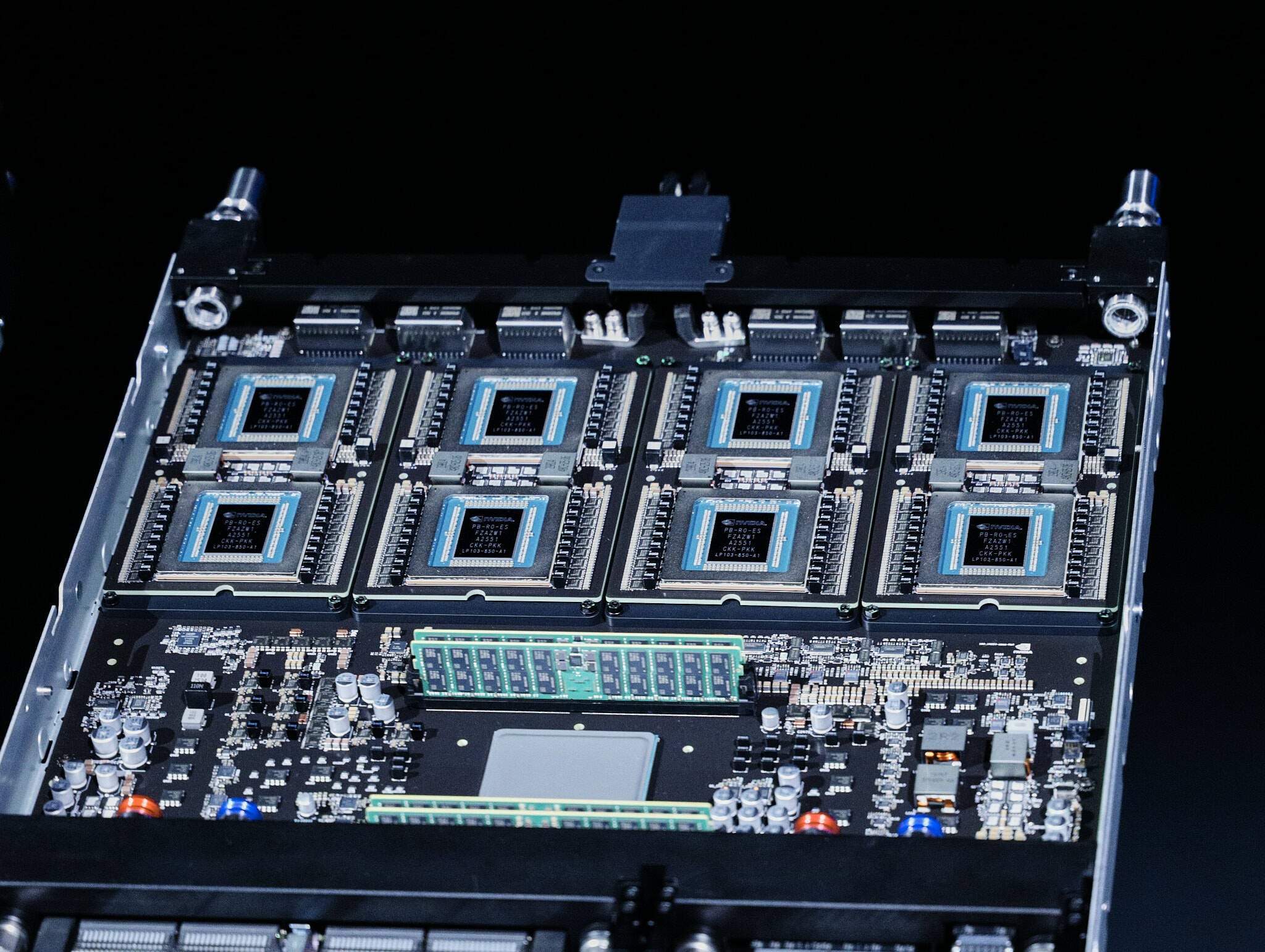

"The company's newly announced Groq 3 LPX racks, which pack 256 LP30 language processing units (LPUs) into a single system, show time-to-market was the reason Nvidia bought rather than built. We're told the chip is based on Groq's second-gen LPU tech with a handful of last-minute tweaks made just before taping out at Samsung's fabs."

"One of the defining characteristics of SRAM-heavy architectures from Groq and its rival Cerebras is that they are very fast when running LLM inferencing workloads, routinely achieving generation rates exceeding 500 and even 1000 tokens a second. The faster Nvidia can generate tokens, the faster code assistants and AI agents can act."

"The idea is that by letting "reasoning" models generate more "thinking" tokens, they can produce smarter, more accurate results. So, the faster you can generate tokens, the less of a latency penalty test-time scaling incurs. On stage at GTC, Huang suggested that this high-performance and low-latency inference provider could eventually charge as much as $150 per million tokens."

Nvidia bought Groq rather than building its own design because of time-to-market pressure. The Groq 3 LPX racks pack 256 LP30 LPUs into a single system, and the chip is based on Groq's second-generation LPU technology with only a handful of last-minute tweaks before tape-out at Samsung. The chips lack Nvidia's proprietary NVLink interconnect, NVFP4 hardware support, and initial CUDA compatibility, which suggests Nvidia prioritized rapid deployment. SRAM-heavy architectures such as those from Groq and Cerebras are very fast at LLM inference, routinely exceeding 500 and even 1,000 tokens per second. That speed reduces the latency penalty of test-time scaling, in which reasoning models generate additional "thinking" tokens to produce more accurate results. On stage at GTC, Huang suggested that a provider of this high-performance, low-latency inference could eventually charge as much as $150 per million tokens.
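To make the latency argument concrete, here is a rough back-of-the-envelope sketch in Python. The 500 and 1,000 tokens-per-second rates and the $150 per million tokens price come from the article; the 10,000-token "thinking" budget and the 50 tokens-per-second baseline are assumptions chosen only for illustration, and the model ignores prefill time, queueing, and batching.

```python
def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to emit num_tokens at a steady decode rate (ignores prefill and queueing)."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


def cost_usd(num_tokens: int, usd_per_million_tokens: float) -> float:
    """Cost of num_tokens at a flat per-million-token price."""
    return num_tokens * usd_per_million_tokens / 1_000_000


# Assumption: a reasoning model emits 10,000 thinking tokens before answering.
THINKING_TOKENS = 10_000

# 500 and 1000 tok/s are the rates quoted in the article;
# 50 tok/s is an assumed slower baseline for comparison.
for rate in (50, 500, 1000):
    seconds = generation_seconds(THINKING_TOKENS, rate)
    print(f"{rate:>5} tok/s -> {seconds:6.1f} s of thinking latency")

# $150 per million tokens is the upper figure Huang floated on stage.
print(f"cost of the thinking tokens: ${cost_usd(THINKING_TOKENS, 150):.2f}")
```

Under these assumptions, the same thinking budget takes 200 seconds at 50 tokens per second but 20 and 10 seconds at 500 and 1,000 tokens per second, and costs $1.50 at the quoted price ceiling.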

#nvidia-groq-acquisition #ai-inference-acceleration #token-generation-speed #test-time-scaling #sram-architecture

Read at The Register
