"Chips are being redesigned because efficiency determines how fast intelligence can scale. Energy becomes central because it sets the ceiling on how much intelligence can be produced at all."

"All this inference stuff is incredibly threatening to Jensen, because it's all efficiency-driven. He's desperately trying to find a way to extend the franchise into inference."

"Energy efficiency is no longer a nice-to-have, it's a must-have. Electricity is physically the limiting factor in how much artificial intelligence can be deployed and scaled across the industry."

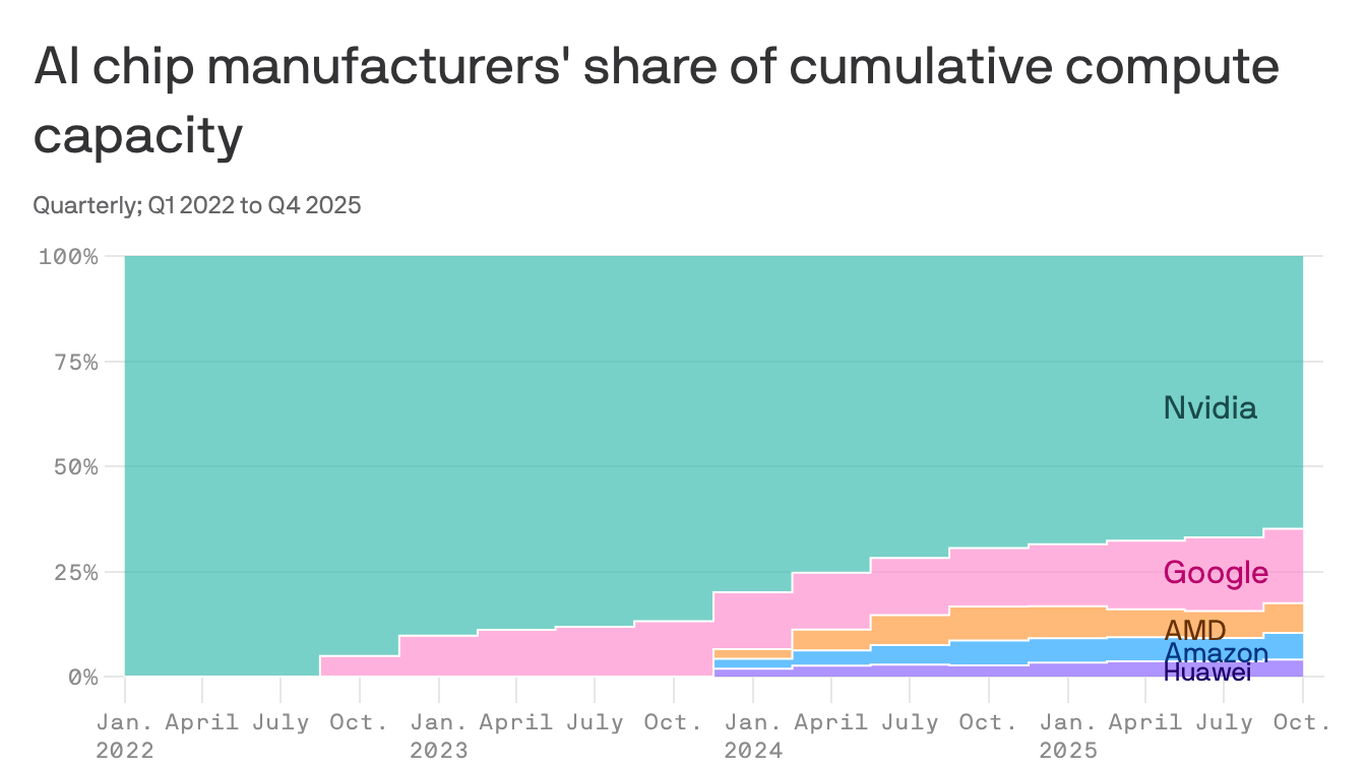

Nvidia posted record sales and earnings, driven by massive data center orders from major tech companies. However, the company's market share has dropped significantly, from 100% in early 2022 to 65% by late 2024. Energy efficiency has shifted from a peripheral concern to a fundamental requirement for scaling AI, because chips determine how much useful computation a data center can extract from the electricity available to it. Nvidia also faces a strategic challenge as the industry shifts from AI training to inference, where efficiency-driven approaches threaten its traditional competitive advantage.

#nvidia-market-dominance #ai-chip-efficiency #data-center-power-consumption #ai-training-vs-inference #energy-constraints-in-ai

Read at Axios
