#test-suite-management

from DevOps.com

1 day ago: Is Your AI Agent Secure? The DevOps Case for Adversarial QA Testing

The most dangerous assumption in quality engineering right now is that you can validate an autonomous testing agent the same way you validated a deterministic application. When your systems can reason, adapt, and make decisions on their own, that linear validation model collapses.

Information security

from The Register

2 weeks ago: Junior disobeyed orders, tried untested feature during demo

Lydia noticed the machine's battery was running low and told two other team members. The more senior went to fetch the backup battery, while the junior team member suggested a quicker method that Lydia firmly rejected.

Gadgets

from Loopwerk

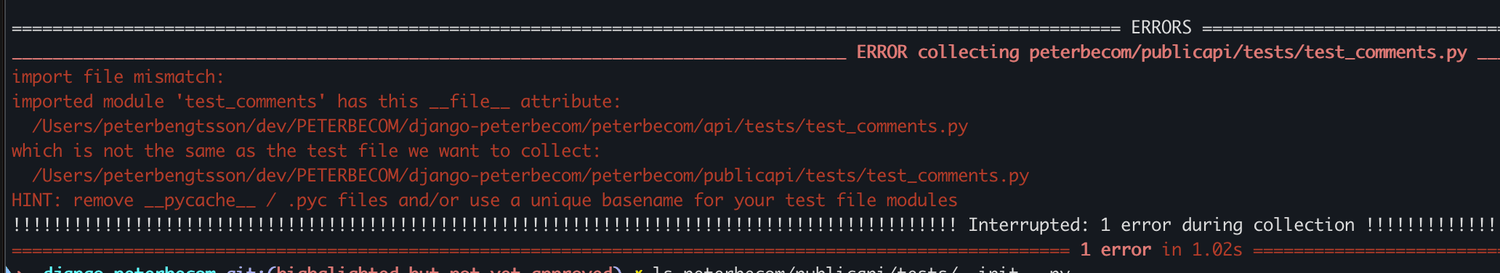

2 months ago: Django's test runner is underrated

Readable failures. When something breaks, I want to understand why in seconds, not minutes. Predictable setup. I want to know exactly what state my tests are running against. Minimal magic. The less indirection between my test code and what's actually happening, the better. Easy onboarding. New team members should be able to write tests on day one without learning a new paradigm.

Web frameworks

Software development

from DevOps.com

1 month ago: Can QA Reignite its Purpose in the Agentic Code Generation Era?

AI now generates 41% of all code, and 84% of developers are adopting it. Effective agentic QA therefore requires deterministic execution, isolated environments, and convergent correctness signals.

from SecurityWeek

1 month ago: How to Eliminate the Technical Debt of Insecure AI-Assisted Software Development

This extends to the software development community, which is seeing a near-ubiquitous presence of AI-coding assistants as teams face pressures to generate more output in less time. While the huge spike in efficiencies greatly helps them, these teams too often fail to incorporate adequate safety controls and practices into AI deployments. The resulting risks leave their organizations exposed, and developers will struggle to backtrack in tracing and identifying where - and how - a security gap occurred.

Artificial intelligence

from DevOps.com

1 month ago: Harness Readies Resilience Testing Platform to Make Applications More Robust

The Harness Resilience Testing platform extends the scope of the tests provided to include application load and disaster recovery (DR) testing tools that will enable DevOps teams to further streamline workflows.

DevOps

from SitePoint Forums | Web Development & Design Community

2 months ago: What is the best way to differentiate between performance testing and a true reliability test system?

Prioritize fault tolerance before resource optimization; automate long-term reliability tests with staged, parallel, and targeted strategies to preserve CI/CD velocity.

Software development

from InfoQ

1 month ago: How a Small Enablement Team Supported Adopting a Single Environment for Distributed Testing

Reusing one development environment with versioned deployments and proxy routing, enabled by a small enablement team and cultural buy-in, scales distributed-system testing.

from InfoQ

2 months ago: Getting Feedback from Test-Driven Development and Testing in Production

Hast mentioned that they trust their unit tests and integration tests individually, and all of them together as a whole. They have no end-to-end tests: "We achieved this by using good separation of concerns, modularity, abstraction, low coupling, and high cohesion. These mechanisms go hand in hand with TDD and pair programming. The result is a better domain-driven design with high code quality." Previously, they had more HTTP application integration tests that tested the whole app, but they have moved away from these (or kept only a few happy-path cases) in favor of more focused tests with shorter feedback loops, Hast mentioned.

Software development

from DevOps.com

1 month ago: What to do About AI's Forced Rethink of Reliability in Modern DevOps

For years, reliability discussions have focused on uptime and whether a service met its internal SLO. However, as systems become more distributed, reliant on complex internet stacks, and integrated with AI, this binary perspective is no longer sufficient. Reliability now encompasses digital experience, speed, and business impact. For the second year in a row, The SRE Report highlights this shift.

Software development

from Medium

1 month ago: Test smart: how to solve dilemmas as QA?

To find the typical example, just observe an average stand-up meeting. The ones who talk more get all the attention. In her article, software engineer Priyanka Jain tells the story of two colleagues assigned the same task. One posted updates, asked questions, and collaborated loudly. The other stayed silent and shipped clean code. Both delivered. Yet only one was praised as a "great team player."

Software development

from InfoQ

2 months ago: What Testers Can Do to Ensure Software Security

A secure software development life cycle means baking security into plan, design, build, test, and maintenance, rather than sprinkling it on at the end, Sara Martinez said in her talk Ensuring Software Security at Online TestConf. Testers aren't bug finders but early defenders, building security and quality in from the first sprint. Culture first, automation second, continuous testing and monitoring all the way; that's how you make security a habit instead of a fire drill, she argued.

Software development

from DBmaestro

4 years ago: What is Database Delivery Automation and Why Do You Need It?

Manual database deployment means longer release times. Database specialists have to spend several working days before each release writing and testing scripts, which in itself prolongs deployment cycles and leaves less time for testing. As a result, applications are not released on time, and customers do not receive the latest updates and bug fixes. Manual work inevitably results in errors, which cause problems and bottlenecks.

Software development

from DevOps.com

2 months ago: Bot-Driven Development: Redefining DevOps Workflow

Industry professionals are realizing what's coming next, and it's well captured in a recent LinkedIn thread that says AI is moving on from being just a helper to a full-fledged co-developer - generating code, automating testing, managing whole workflows and even taking charge of every part of the CI/CD pipeline. Put simply, AI is transforming DevOps into a living ecosystem, one driven by close collaboration between human judgment and machine intelligence.

Software development

from InfoWorld

1 month ago: Visual Studio adds GitHub Copilot unit testing for C#

GitHub Copilot testing automatically generates, runs, and iteratively repairs unit tests, provides structured summaries and coverage insights, and supports free-form .NET prompting (paid license required).

Software development

from DBmaestro

4 years ago: If You Don't Have Database Delivery Automation, Brace Yourself for These 10 Problems

Manual database processes break DevOps pipelines; only 12% deploy database changes daily, causing configuration drift, frequent errors, slower time-to-market, and reduced productivity.

from DBmaestro

4 years ago: [VIDEO] End-to-End CI/CD with GitLab and DBmaestro

DBmaestro is a database release automation solution that can blend the database delivery process seamlessly into your current DevOps ecosystem with minimal fuss, and without complex installation or maintenance. Its handy database pipeline builder allows you to package, verify, and deploy, and gives you the ability to pre-run the next release in a provisional environment to detect errors early. You get a zero-friction pipeline, which is often not the case with database delivery processes.

Software development

from InfoWorld

2 months ago: Microsoft adds WinUI support to MSTest

The MSTest framework can be accessed via NuGet. With MSTest 3.4, support for WinUI framework applications is added to MSTest.Runner. With this improvement, a project sample is offered and work is under way to simplify testing of unpackaged WinUI applications. Microsoft also has improved the test runner's performance by using built-in System.Text.Json for .NET rather than Jsonite and by caching command line options.

Software development
